AI CERTS

11 hours ago

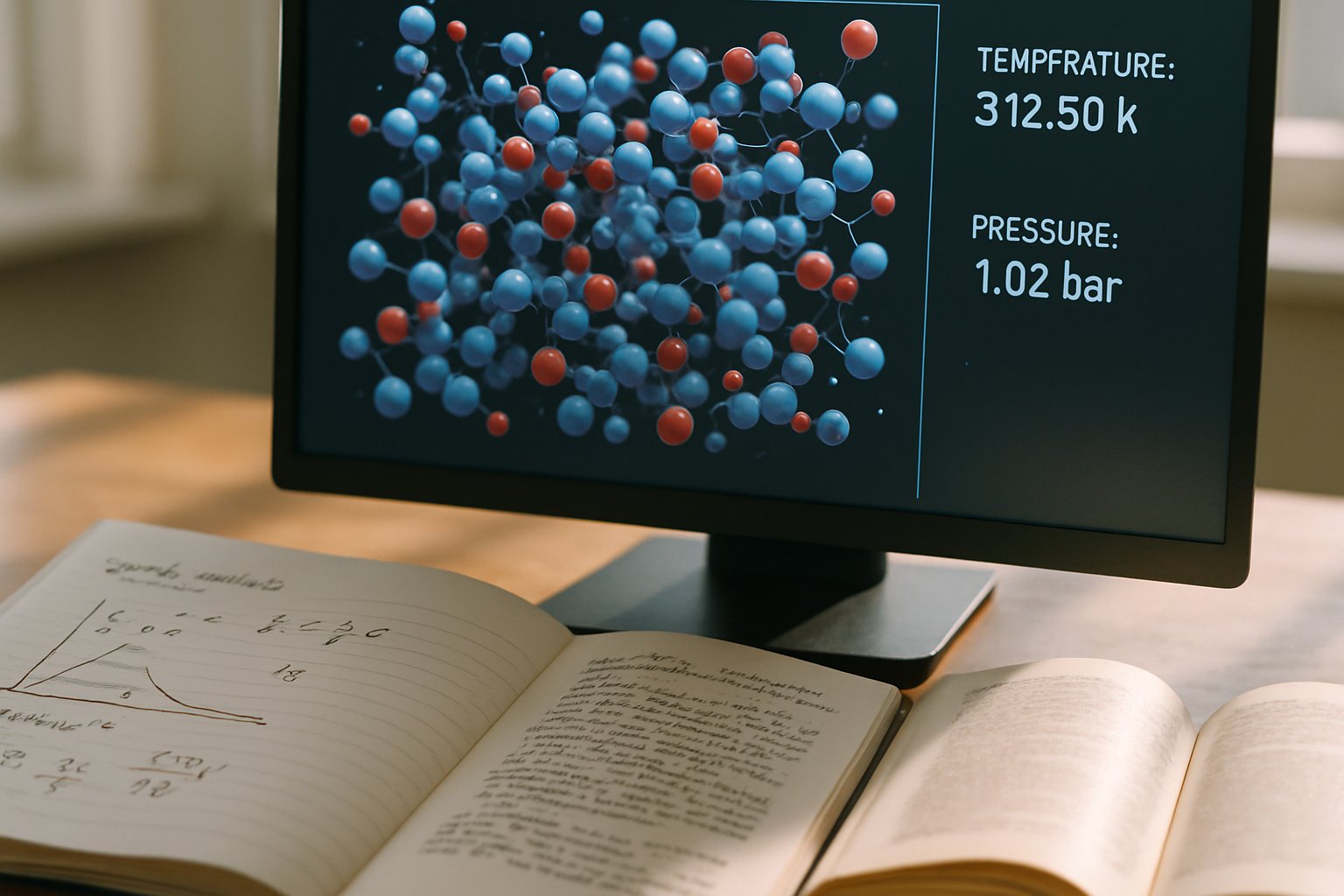

Chemistry AI Simulation Breakthroughs in High-Pressure Modeling

Microsecond trajectories and thousand-atom cells are becoming routine rather than aspirational in high-pressure modeling. This article explores the architectures, physics, scalability, and limitations guiding the field between 2025 and 2026. We then outline skills and certifications that help professionals keep pace with this emerging wave.

We also highlight gaps that still challenge deployment inside industrial laboratories. Finally, we examine how these breakthroughs inform studies of planetary cores and of quantum mechanics under compression.

AI Frameworks Revolutionizing Simulation

DeePMD-kit v3 headlines the production lineup by supporting multiple ML backends and efficient GPU scaling. Furthermore, NequIP's April 2025 overhaul introduces optimized kernels that keep equivariant models fast on multi-node clusters. MACE and Allegro families complement this ecosystem by offering alternative symmetry handling and foundation models. Consequently, Chemistry AI Simulation developers now select architectures according to accuracy, data efficiency, and hardware constraints.

Equivariant graph networks reduce training data requirements by up to three orders of magnitude, according to reports in Nature Communications. Meanwhile, chemtrain-deploy pushes trained potentials into LAMMPS, enabling million-atom molecular dynamics campaigns. The collective result is a vibrant, interoperable stack that accelerates discovery cycles. Researchers still anchor training sets in rigorous quantum mechanical calculations to secure transferability.

Collectively, these frameworks form the backbone of Chemistry AI Simulation research. However, accuracy improvements rely on innovations inside the models themselves.

Equivariant Models Boost Accuracy

Equivariant networks sit at the core of Chemistry AI Simulation accuracy gains. Their internal features transform predictably under rotation, so they capture the vectorial interactions that become critical under anisotropic compression. NequIP, MACE, and Allegro carry geometric tensor features through the network, so predicted forces rotate with the structure, as the underlying physics requires. Consequently, energy errors often fall below five meV per atom across diverse datasets.
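Equivariance has a simple operational test: rotating the input structure should rotate the predicted forces by the same rotation. The sketch below runs that test on a Lennard-Jones pair potential, which is only a stand-in for a learned model; the function names, atom positions, and rotation angle are illustrative assumptions, not part of any framework named above.

```python
import math

def lj_forces(positions, epsilon=1.0, sigma=1.0):
    """Forces from a Lennard-Jones pair potential (stand-in for a learned model)."""
    n = len(positions)
    forces = [[0.0, 0.0, 0.0] for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d = [positions[i][k] - positions[j][k] for k in range(3)]
            r2 = sum(c * c for c in d)
            sr6 = (sigma * sigma / r2) ** 3
            # (-dU/dr) / r, so that coeff * d is the force vector on atom i
            coeff = 24.0 * epsilon * (2.0 * sr6 * sr6 - sr6) / r2
            for k in range(3):
                forces[i][k] += coeff * d[k]
                forces[j][k] -= coeff * d[k]
    return forces

def rotate_z(vectors, angle):
    """Rotate a list of 3-vectors about the z axis."""
    c, s = math.cos(angle), math.sin(angle)
    return [[c * x - s * y, s * x + c * y, z] for x, y, z in vectors]

# Hypothetical four-atom cluster in reduced units
positions = [[0.0, 0.0, 0.0], [1.1, 0.2, 0.0], [0.3, 1.2, 0.4], [1.0, 1.0, 1.3]]
angle = 0.7

forces_then_rotate = rotate_z(lj_forces(positions), angle)
rotate_then_forces = lj_forces(rotate_z(positions, angle))

# For an equivariant force model the two orders of operation agree
max_dev = max(
    abs(a - b)
    for fa, fb in zip(forces_then_rotate, rotate_then_forces)
    for a, b in zip(fa, fb)
)
print(f"max deviation: {max_dev:.2e}")
```

The same check can be pointed at any force model by replacing `lj_forces`; a deviation well above floating-point noise would indicate that the model has to learn rotational symmetry from data instead of having it built in.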

Longer timescale fidelity emerges because fewer spurious artifacts accumulate over microsecond trajectories. In contrast, older scalar descriptor models required larger training corpora yet yielded lower accuracy.

Equivariance trims data costs while safeguarding physical correctness. Nevertheless, missing long-range physics previously limited their scope.

Long Range Physics Advances

High-pressure phases often involve partial charges, dipoles, and polarization. Long-range electrostatics remained a blind spot for many Chemistry AI Simulation potentials until recently. Bingqing Cheng's Latent Ewald Summation bridges this gap by learning charges from energy labels. Moreover, model-agnostic augmentation embeds Coulombic terms without sacrificing GPU throughput.
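To make the long-range term concrete, the sketch below evaluates the standard reciprocal-space part of an Ewald sum for point charges in a cubic periodic box. It is the textbook formula in reduced units (Coulomb constant set to 1), not the Latent Ewald Summation implementation; in a learned scheme the charges would come from the model, whereas here they are fixed, hypothetical values. The function name, `alpha`, and `n_max` are illustrative assumptions, and both parameters would need convergence testing in practice.

```python
import cmath
import math
from itertools import product

def ewald_reciprocal_energy(positions, charges, box_length, alpha=0.4, n_max=4):
    """Reciprocal-space Ewald energy for a cubic box (reduced units).

    E = (2*pi / V) * sum_{k != 0} exp(-k^2 / (4 alpha^2)) / k^2 * |S(k)|^2,
    with structure factor S(k) = sum_i q_i * exp(i k . r_i).
    """
    volume = box_length ** 3
    total = 0.0
    for n in product(range(-n_max, n_max + 1), repeat=3):
        if n == (0, 0, 0):
            continue  # the k = 0 term is excluded
        k = [2.0 * math.pi * c / box_length for c in n]
        k2 = sum(c * c for c in k)
        structure_factor = sum(
            q * cmath.exp(1j * sum(kc * rc for kc, rc in zip(k, r)))
            for q, r in zip(charges, positions)
        )
        total += math.exp(-k2 / (4.0 * alpha ** 2)) / k2 * abs(structure_factor) ** 2
    return 2.0 * math.pi / volume * total

# Hypothetical ion pair in a 10 x 10 x 10 box
positions = [[1.0, 1.0, 1.0], [4.0, 5.0, 6.0]]
charges = [1.0, -1.0]
energy = ewald_reciprocal_energy(positions, charges, box_length=10.0)

# Shifting every atom by the same vector must leave the energy unchanged
shifted = [[x + 0.37, y - 1.2, z + 2.5] for x, y, z in positions]
energy_shifted = ewald_reciprocal_energy(shifted, charges, box_length=10.0)
print(energy, energy_shifted)
```

Because the sum runs over a fixed grid of wave vectors, this term is smooth in the charges and positions, which is what makes it practical to attach to a short-range learned potential.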

Subsequently, ionic and interfacial systems gained millielectronvolt-level accuracy at extreme conditions. Researchers studying planetary cores now resolve proton hopping and superionic transitions with confidence. These improvements broaden the chemical palette reachable computationally.

Long-range fixes complete the physical foundation of modern potentials. Scaling capabilities then determine practical impact.

Scaling To Planetary Pressures

Speed matters when simulating gigapascal environments. DeePMD and DNN@MB-pol achieved microsecond trajectories for 512 water molecules during 2025. Furthermore, optimized kernels yield three-order-of-magnitude wall-clock savings versus traditional ab-initio molecular dynamics. Consequently, researchers accessed previously elusive liquid-liquid transitions.
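A quick calculation shows why a three-order-of-magnitude speedup matters for microsecond trajectories. The sketch below counts integration steps and converts them to wall-clock time; the 0.5 fs timestep and the 10 s per ab-initio step are assumed, illustrative values and are not figures from the studies mentioned above.

```python
SECONDS_PER_DAY = 86400.0

def wall_clock_days(trajectory_fs, timestep_fs, seconds_per_step):
    """Wall-clock days needed to integrate a trajectory of the given length."""
    n_steps = trajectory_fs / timestep_fs
    return n_steps * seconds_per_step / SECONDS_PER_DAY

trajectory_fs = 1.0e9      # 1 microsecond expressed in femtoseconds
timestep_fs = 0.5          # assumed timestep
aimd_seconds_per_step = 10.0   # assumed ab-initio cost per step
speedup = 1.0e3            # three orders of magnitude, as quoted in the text

aimd_days = wall_clock_days(trajectory_fs, timestep_fs, aimd_seconds_per_step)
mlp_days = wall_clock_days(trajectory_fs, timestep_fs, aimd_seconds_per_step / speedup)

print(f"steps required: {trajectory_fs / timestep_fs:.1e}")
print(f"AIMD estimate: {aimd_days:,.0f} days")
print(f"ML potential estimate: {mlp_days:,.0f} days")
```

Under these assumptions the ab-initio run would take centuries, while the accelerated run fits within a single allocation cycle; different timesteps or hardware shift the absolute numbers but not the ratio.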

Chemistry AI Simulation campaigns now scale to millions of atoms on GPU clusters, approaching the length scales probed in experiments. Meanwhile, active learning workflows curtail costly data generation by triggering DFT calls only when uncertainty spikes.

- 10^3–10^6× speedups cut simulation cost relative to AIMD.

- Microsecond trajectories reveal kinetic pathways in liquid-liquid transitions.

- Million-atom cells capture grain boundaries under shock compression.
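The active learning trigger mentioned above can be sketched with a committee of models: when their force predictions disagree strongly on a frame, that frame is sent for DFT labeling. This is one common way to estimate uncertainty, assumed here for illustration; the function names, the toy predictions, and the 0.1 threshold are hypothetical.

```python
import statistics

def max_force_disagreement(committee_forces):
    """Largest per-atom spread of force predictions across a committee.

    committee_forces[m][i] is the force vector on atom i predicted by model m.
    """
    n_atoms = len(committee_forces[0])
    worst = 0.0
    for i in range(n_atoms):
        # Variance of each Cartesian component across models, combined into one norm
        variances = [
            statistics.pvariance([model[i][k] for model in committee_forces])
            for k in range(3)
        ]
        worst = max(worst, sum(variances) ** 0.5)
    return worst

def select_for_dft(frames, threshold):
    """Indices of frames whose committee disagreement exceeds the threshold."""
    return [
        idx for idx, committee_forces in enumerate(frames)
        if max_force_disagreement(committee_forces) > threshold
    ]

# Toy data: two frames, three models, two atoms each
agreeing_frame = [
    [[0.10, 0.0, 0.0], [-0.10, 0.0, 0.0]],
    [[0.11, 0.0, 0.0], [-0.11, 0.0, 0.0]],
    [[0.09, 0.0, 0.0], [-0.09, 0.0, 0.0]],
]
disagreeing_frame = [
    [[0.5, 0.0, 0.0], [-0.5, 0.0, 0.0]],
    [[1.1, 0.0, 0.0], [-1.1, 0.0, 0.0]],
    [[-0.2, 0.0, 0.0], [0.2, 0.0, 0.0]],
]
frames = [agreeing_frame, disagreeing_frame]

to_label = select_for_dft(frames, threshold=0.1)
print("frames needing DFT labels:", to_label)
```

Only the flagged frames incur a reference calculation, which is how such loops keep data generation costs down while still covering unfamiliar configurations.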

Industry groups now sponsor Chemistry AI Simulation benchmarks on national supercomputers. These scaling feats bring Chemistry AI Simulation nearer to experimental planetary studies. Yet limitations still temper uncritical adoption.

Limitations And Open Questions

Transferability tests reveal certain Chemistry AI Simulation models falter under novel conditions. Models can soften disastrously when pushed outside trained pressure or temperature domains. Moreover, experimental benchmarks above 100 GPa are scarce, complicating validation efforts. Therefore, researchers must cross-validate against multiple references and maintain active learning loops.
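One lightweight safeguard is to record the range of conditions covered by the training set and check each planned state point against it before running. The sketch below assumes a simple rectangular pressure-temperature envelope; the class name, bounds, and messages are hypothetical, and a real workflow would likely use a richer description of the training distribution.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class TrainingDomain:
    """Hypothetical envelope of conditions covered by the training set."""
    pressure_gpa: tuple    # (min, max) in GPa
    temperature_k: tuple   # (min, max) in K

    def covers(self, pressure_gpa, temperature_k):
        p_lo, p_hi = self.pressure_gpa
        t_lo, t_hi = self.temperature_k
        return p_lo <= pressure_gpa <= p_hi and t_lo <= temperature_k <= t_hi

def check_state_point(domain, pressure_gpa, temperature_k):
    """Report whether a planned simulation stays inside the training envelope."""
    if domain.covers(pressure_gpa, temperature_k):
        return "inside training domain"
    return "extrapolating: validate against reference calculations first"

# Assumed bounds, for illustration only
domain = TrainingDomain(pressure_gpa=(0.0, 100.0), temperature_k=(300.0, 3000.0))

status_in = check_state_point(domain, pressure_gpa=50.0, temperature_k=1500.0)
status_out = check_state_point(domain, pressure_gpa=150.0, temperature_k=1500.0)
print(status_in)
print(status_out)
```

A check like this does not prove a model is accurate inside the envelope; it only flags the runs where cross-validation is most urgent.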

Quantum mechanical effects such as electronic correlation in metallic hydrides can still escape current potentials. Additionally, long-range fixes require careful parameter tuning for mixed metallic-ionic systems. Computational cost also resurfaces during distributed training, demanding access to large GPU allocations.

Limitations highlight the ongoing need for rigorous benchmarking and cross-disciplinary collaboration. Consequently, upskilling becomes essential for teams deploying these tools.

Skills And Certification Pathways

Teams need combined expertise in physics, data science, and software engineering. Professionals can boost expertise through the AI Engineer™ certification. Consequently, holders gain structured knowledge of model lifecycle management, data pipelines, and performance tuning.

Robust deployment of Chemistry AI Simulation still demands disciplined software practices. Meanwhile, emerging workshops teach active learning strategies and HPC deployment. Experts on planetary cores increasingly collaborate with computer scientists during these sessions. Subsequently, cross-functional teams reduce iteration cycles and publication time.

Targeted training accelerates adoption while improving reproducibility. The field now moves toward broader industrial uptake.

Conclusion And Forward Outlook

Chemistry AI Simulation has shifted high-pressure research from ambitious to accessible. Equivariant architectures, long-range corrections, and scalable tools collectively extend time and length scales. Furthermore, concrete demonstrations in water and superhydride studies validate the scientific payoff. Nevertheless, model transferability and experimental scarcity still impede definitive predictions for some planetary-core scenarios.

Therefore, continued benchmarking, active learning, and workforce development remain critical next steps. Readers should explore certification paths and contribute to open data initiatives to propel the discipline. Act now by enrolling in the AI Engineer™ program and joining the next computational breakthrough.