AI CERTS


Deepfake Video Propaganda: Rising Threats and Detection Gaps

This article examines the surge in AI-generated video propaganda, assesses its national security implications, and maps emerging countermeasures. Along the way, we spotlight detection research, evolving regulation, and the role of corporate trust building. Readers will also learn where professional certifications can help them steer responsible AI strategy. Every promise of creative freedom now carries an equally potent risk of deception. Understanding both sides is essential for leaders confronting the deepfake video age.

AI Propaganda Surge Today

Google’s Veo 3 went global in July 2025 and changed the content economy overnight. Within eight weeks, users created over 70 million clips, according to Google Cloud metrics. Consequently, moderation teams faced a flood of synthetic riot scenes, racist rants, and election lies. TIME investigators quickly demonstrated how a short text prompt could yield staged ballot-shredding clips. Meanwhile, watchdogs documented the material spreading across YouTube, TikTok, and fringe forums before takedowns. Microsoft Threat Intelligence highlighted early evidence of state actors inserting deepfake video into influence operations.

In contrast, many benign creators used the same models for advertising, education, and personal storytelling. Such dual-use mechanics complicate policy responses because banning a model would stifle legitimate creativity. Margaret Mitchell from Hugging Face noted that realistic audio sync makes propaganda far more persuasive. Therefore, the scale, speed, and realism together mark a decisive break from prior misinformation cycles. The surge shows how quickly synthetic media tools diffuse. However, deeper risks unfold in national security arenas, which we examine next.

Threats To National Security

Defense analysts treat algorithmic propaganda as a strategic threat equal to cyber intrusion. Nation-state groups like Storm-1376 already paired AI anchors with hostile narratives during Taiwan’s 2024 vote. Additionally, Veo 3 misuse offers adversaries faster production than traditional covert studios ever allowed. Synthetic clips can fabricate troop movements, chemical attacks, or surrender announcements, eroding battlefield morale instantly. Consequently, commanders might make fatal decisions before verification teams debunk the fake footage. Intelligence officers also fear that disinformation flurries could overwhelm civilian alert systems during hurricanes or wildfires.

Domestically, extremists exploit deepfake video to incite local violence or target officials. New Jersey responded by criminalizing deceptive synthetic media, yet cross-border enforcement remains elusive. Moreover, the UN’s ITU urged global watermark standards, arguing that fragmented rules leave gaps adversaries can exploit. Nevertheless, experts stress that technical defenses must complement diplomatic pressure and resilient public communication channels. Together, these developments reveal multi-level vulnerabilities for national security planners. The next section unpacks how toolchains amplify propaganda creation.

Deepfake Video Toolchains Rise

Modern pipelines merge text prompts, diffusion generation, voice cloning, and rapid caption translation. Furthermore, preset templates on CapCut let novices customize campaign logos in seconds. Runway and Synthesia offer browser interfaces where drag-and-drop assets yield polished political ads. Consequently, the skills barrier collapses, broadening the pool of actors capable of manufacturing fake footage. For attackers, cost per clip often falls below one dollar, enabling spam-style narrative flooding. Therefore, defenders must anticipate mass video dumps rather than isolated releases. Toolchain integration accelerates both creation and distribution. Next, we examine specific tactics propagandists deploy using these systems.

Tactics Behind Fake Footage

Researchers catalog four dominant deception patterns. First, adversaries craft counterfeit news segments featuring AI anchors and forged network logos. Second, staged riot montages depict violent crowds at nonexistent polling stations. Third, impersonation clips fabricate leader endorsements or confessions using cloned voices. Fourth, asset laundering seeds vague videos across dummy accounts to nurture false consensus.

- 70M Veo 3 videos produced within two months of launch

- Eight-second limit expanded to 1080p formats by September 2025

- NIST detection benchmarks still below 90% accuracy on new datasets

Deepfake video packages these patterns into emotionally charged micro-stories that feel authentic. Moreover, timing is crucial because audiences often encounter fake footage during chaotic breaking news windows. Consequently, fact-checkers lag behind virality curves, allowing narratives to crystallize emotionally. Hany Farid warns that even satire morphs into weaponized misinformation once its disclaimers are stripped away with the context. Attacks scale horizontally: dozens of variants emerge, each tweaked to evade hash-based detection. These tactics highlight asymmetric advantages favoring propagandists. However, advancing detection technologies aim to narrow the gap, as discussed below.
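To illustrate why small tweaks defeat hash-based matching, here is a minimal Python sketch. It assumes a toy 4x4 grayscale frame and uses a simplified average hash as one example of a perceptual hash. The frame values and function names are hypothetical and do not come from any platform's actual pipeline.

```python
import hashlib


def exact_hash(pixels):
    """SHA-256 over the raw pixel bytes; changing any value changes the digest."""
    flat = bytes(v for row in pixels for v in row)
    return hashlib.sha256(flat).hexdigest()


def average_hash(pixels):
    """Simplified average hash: one bit per pixel, set when brighter than the frame mean."""
    flat = [v for row in pixels for v in row]
    mean = sum(flat) / len(flat)
    return [1 if v > mean else 0 for v in flat]


def hamming(a, b):
    """Number of positions where two equal-length bit lists differ."""
    return sum(x != y for x, y in zip(a, b))


# Hypothetical 4x4 grayscale frame (dark left half, bright right half).
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]

# A "variant" an attacker might produce: every pixel brightened by 3.
variant = [[min(255, v + 3) for v in row] for row in original]

# An unrelated frame for comparison: the inverted image.
inverted = [[255 - v for v in row] for row in original]

exact_match = exact_hash(original) == exact_hash(variant)
variant_distance = hamming(average_hash(original), average_hash(variant))
inverted_distance = hamming(average_hash(original), average_hash(inverted))

print("exact hashes match:", exact_match)
print("perceptual distance to variant:", variant_distance)
print("perceptual distance to inverted frame:", inverted_distance)
```

In this toy case the cryptographic hashes differ even though the frames look almost identical, while the perceptual hash still treats the variant as a near-duplicate. Practical systems typically downscale each frame first and flag matches below a tuned distance threshold. Heavier edits such as cropping or overlays can still push a variant past that threshold.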

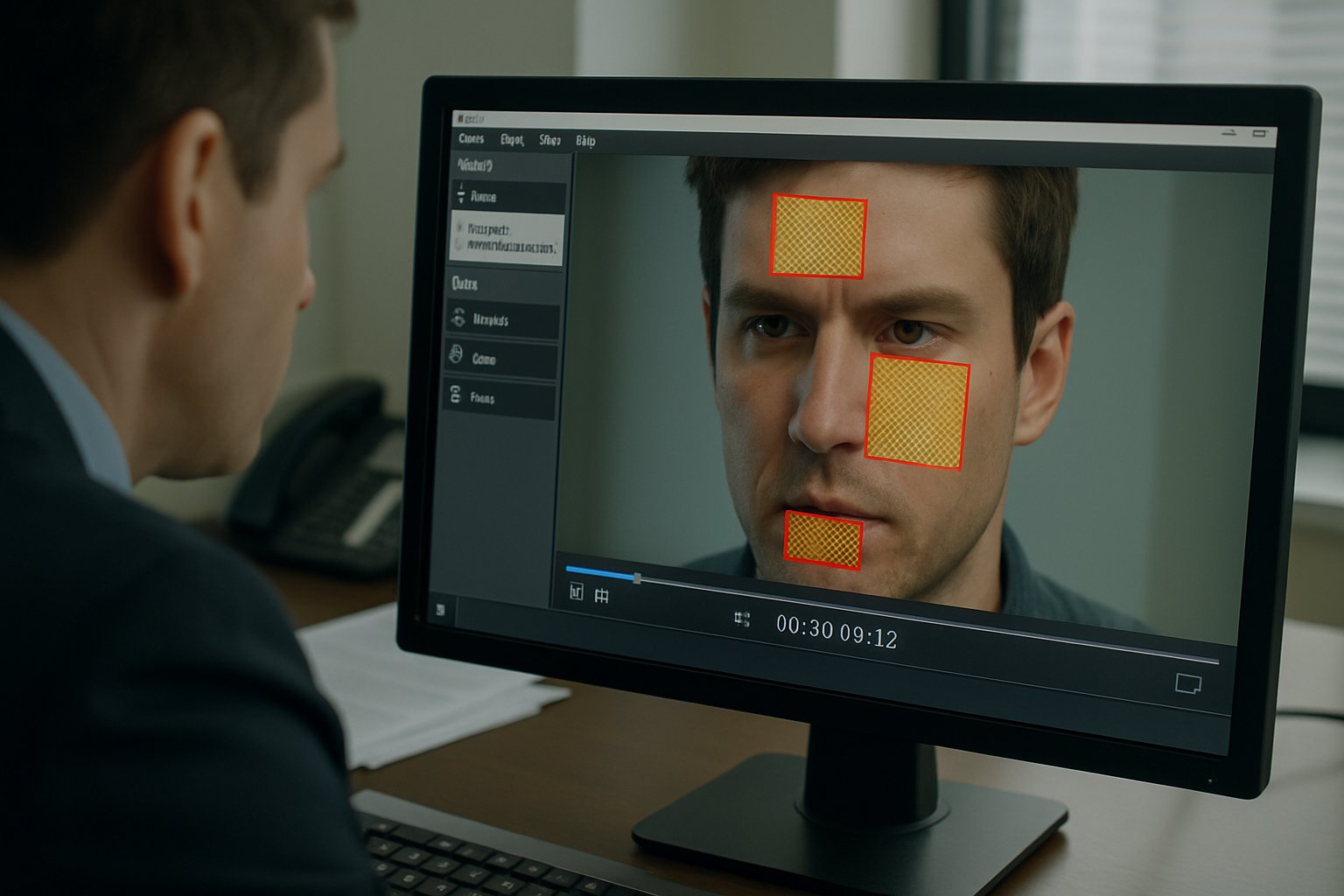

Detection Still Lags Behind

Computer scientists battle an adversarial race against generative models. NIST’s OpenMFC leaderboard shows algorithms misclassify many compression-altered samples. Moreover, invisible watermarks like SynthID vanish when attackers crop or re-encode assets. Consequently, investigators rely on multimodal cues such as lip-audio drift and inconsistent lighting. However, those cues grow subtler as diffusion models improve temporal coherence. Deepfake Video detectors therefore require continuous retraining and shared evaluation datasets. Professionals can enhance their expertise with the AI+ Researcher™ certification.
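As a rough illustration of the lip-audio drift cue, the Python sketch below estimates how many frames the audio lags the mouth movement by finding the lag with the highest cross-correlation. It assumes two per-frame signals already exist, a mouth-openness score and an audio energy value. Both signals, and the function name `best_lag`, are hypothetical; extracting such features from real video is a separate problem not covered here.

```python
def best_lag(video_signal, audio_signal, max_lag):
    """Return the lag (in frames) of audio relative to video that maximizes
    the mean-removed cross-correlation. Positive means the audio trails."""

    def centered(xs):
        mean = sum(xs) / len(xs)
        return [x - mean for x in xs]

    v = centered(video_signal)
    a = centered(audio_signal)
    n = len(v)

    best, best_score = 0, float("-inf")
    for lag in range(-max_lag, max_lag + 1):
        score = 0.0
        for i in range(n):
            j = i + lag
            if 0 <= j < n:  # only compare samples that overlap
                score += v[i] * a[j]
        if score > best_score:
            best, best_score = lag, score
    return best


# Hypothetical per-frame mouth-openness scores for two spoken syllables.
mouth = [0, 0, 1, 3, 1, 0, 0, 2, 4, 2, 0, 0]

# Hypothetical audio energy: the same shape, delayed by two frames.
audio = [0, 0, 0, 0, 1, 3, 1, 0, 0, 2, 4, 2]

drift = best_lag(mouth, audio, max_lag=4)
print("estimated audio lag in frames:", drift)
```

A persistent nonzero lag, or a lag that wanders over the course of a clip, is one signal an investigator might weigh alongside other evidence. It is not proof of synthesis on its own, since ordinary encoding problems can also desynchronize audio.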

Additionally, Microsoft promotes provenance metadata standards to keep source details intact across platforms. Some vendors now embed on-device alerts whenever a deepfake video frame appears on screen. Yet until platforms embed verification by default, viewers must weigh evidence manually before granting trust. Consequently, newsroom workflows now mandate frame-level forensic passes on suspicious submissions. Detection science is advancing but remains reactive. The policy environment therefore becomes a parallel battleground, explored next.

Policy Responses Remain Patchy

Legislators scramble to draft rules that withstand constitutional and technical scrutiny. New Jersey’s 2025 statute criminalizes deceptive distribution within state boundaries. Meanwhile, federal proposals like the NO FAKES Act seek broader coverage yet face lobbying pushback. Internationally, the UN urges universal provenance protocols, but adoption remains voluntary.

Consequently, enforcement differs across jurisdictions, inviting forum shopping by malicious actors. Moreover, industry self-regulation relies on voluntary watermark pledges lacking binding penalties. Current laws create a deterrent signal but fail to guarantee removal speed. Therefore, rebuilding public trust requires complementary social strategies.

Building Public Trust Online

Ultimately, audiences decide whether manipulated content gains traction. Media-literacy campaigns teach users to pause, source, and verify before sharing. Additionally, emergency alert systems now integrate rapid clarification channels from verified agencies. Platforms experiment with visible provenance badges that signal original upload authenticity. However, badges lose impact if designs vary widely or if hoaxers spoof them.

Therefore, cross-platform alignment on design standards would reinforce user trust at scale. Experts also recommend proactive transparency reports detailing deepfake video takedowns and detection accuracy. Civil society groups host livestream workshops decoding deepfake video narratives in real time. Moreover, investors increasingly weigh governance metrics, punishing firms that ignore synthetic content risks.

- Publish near-real-time detection precision statistics.

- Adopt interoperable watermark schemas.

- Fund open forensic research challenges annually.
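For the first recommendation, a transparency report could derive its headline figures from a simple tally of detector outcomes. The Python sketch below shows the standard definitions of precision, recall, and accuracy. The `DetectionCounts` class and the sample counts are hypothetical and purely illustrative, not measurements from any platform.

```python
from dataclasses import dataclass


@dataclass
class DetectionCounts:
    """Outcome tally for a detector over one reporting window."""
    true_positives: int   # fakes correctly flagged
    false_positives: int  # authentic clips wrongly flagged
    true_negatives: int   # authentic clips correctly passed
    false_negatives: int  # fakes that slipped through


def precision(c):
    """Share of flagged clips that were actually fake."""
    flagged = c.true_positives + c.false_positives
    return c.true_positives / flagged if flagged else 0.0


def recall(c):
    """Share of actual fakes that were flagged."""
    fakes = c.true_positives + c.false_negatives
    return c.true_positives / fakes if fakes else 0.0


def accuracy(c):
    """Share of all clips classified correctly."""
    total = (c.true_positives + c.false_positives
             + c.true_negatives + c.false_negatives)
    return (c.true_positives + c.true_negatives) / total if total else 0.0


# Illustrative counts only: 1,000 reviewed clips, 110 of them fake.
sample = DetectionCounts(
    true_positives=90,
    false_positives=10,
    true_negatives=880,
    false_negatives=20,
)

print(f"precision: {precision(sample):.3f}")
print(f"recall:    {recall(sample):.3f}")
print(f"accuracy:  {accuracy(sample):.3f}")
```

Reporting precision and recall together matters because accuracy alone can look strong when fakes are a small share of uploads, even if many of them go undetected.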

Consequently, coordinated action across government, academia, and industry strengthens collective resilience. Sustained collaboration nurtures a culture of evidence-based belief. The concluding section distills these insights into actionable priorities.

Generative video tools will only grow sharper and cheaper. Consequently, the window for fact-checking before harm emerges will continue to shrink. National security circles, newsroom editors, and platform engineers must coordinate rather than operate in silos. Technical detection, resilient policy, and public education form the three necessary legs of the response.

Moreover, continuous skill building through recognized programs keeps teams ahead of threat evolution. Readers seeking deeper analytical capability should pursue the linked AI certification and share lessons widely. By integrating safeguards early, organizations strengthen public trust and protect information integrity. The stakes demand decisive action rather than retrospective regret.