AI CERTs

1 month ago

Automated HR Bias Sparks Legal and Regulatory Shake-Ups

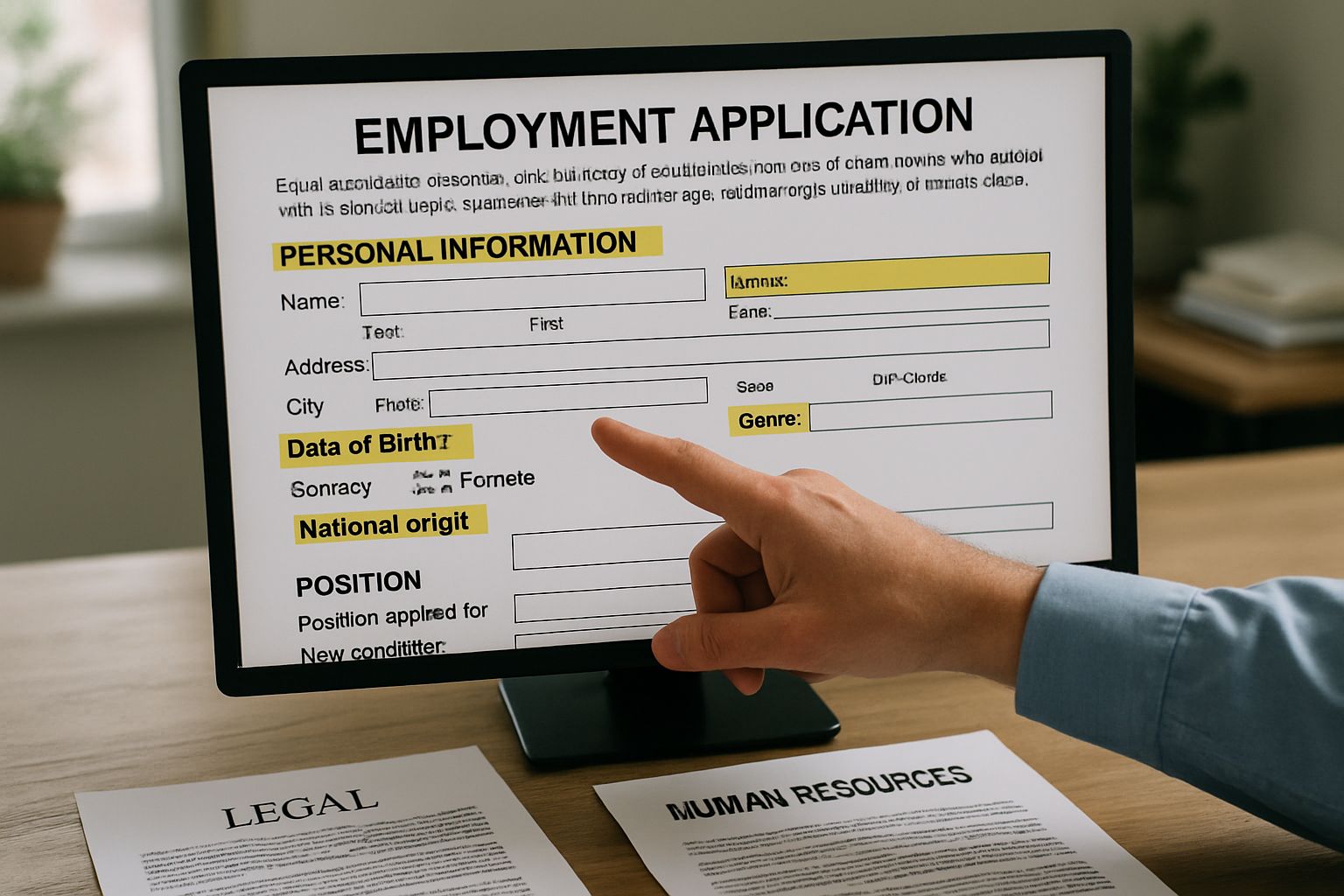

Hiring managers now rely on software that screens millions of résumés each day. However, a legal and reputational storm surrounds these tools as evidence of Automated HR Bias mounts. Industry leaders must understand the rising risks before algorithms decide who even reaches a human recruiter.

Recent lawsuits and regulatory complaints involving Workday, Intuit, and Aon allege systemic discrimination by algorithmic assessments. Regulators and activists argue that unchecked code threatens basic employment rights and corporate reputations. This article examines where Automated HR Bias originates, who faces liability, and how organizations can still hire fairly.

Public opinion is also hardening. A 2023 Pew Research Center survey found that 71% of Americans oppose employers using AI to make final hiring decisions. Recruitment teams must therefore weigh efficiency promises against equality obligations and brand trust. The following sections outline the litigation landscape, regulatory momentum, vendor exposure, and concrete mitigation strategies.

Automated HR Bias Litigation

The Mobley v. Workday class action marks a pivotal test for algorithmic screening liability. In February 2026, a federal judge approved notice to older applicants allegedly rejected by optional filters. Earlier discovery orders already forced Workday to share client lists, expanding exposure for many employers.

Meanwhile, the ACLU filed complaints against Intuit and HireVue alleging race and disability bias in automated video interviews. The ACLU has also asked the FTC to act against Aon, arguing that marketing its assessments as "bias free" is deceptive. Together, these matters raise disparate-impact and disparate-treatment theories under Title VII, the ADA, and the ADEA.

- Workday age discrimination notice reaches applicants rejected since 2019.

- Intuit complaint centers on a deaf candidate allegedly disadvantaged by automated video-interview scoring.

- FTC action on the Aon complaint could set a precedent for how vendors market hiring tools.

These cases show how Automated HR Bias allegations can quickly escalate into multimillion-dollar class actions. Legal exposure now extends beyond employers to software vendors themselves, broadening accountability. Regulators, meanwhile, are moving quickly to codify that accountability.

Regulatory Forces Intensify Rapidly

Federal agencies have sharpened their guidance on AI in hiring since 2023. The EEOC, in particular, advises employers to test automated selection tools for disparate impact on an ongoing basis and to document the results. The FTC, for its part, warns vendors against marketing tools as bias free without empirical validation.

State regulators add another layer, with Colorado and California advancing audit and impact-assessment requirements for recruitment technologies. Meanwhile, the OFCCP reminds federal contractors that equal employment obligations remain unchanged even when algorithms make preliminary decisions. Noncompliance risks contract loss, civil penalties, and public naming in agency press releases.

Regulatory momentum signals that Automated HR Bias defenses resting on the technology's novelty will no longer suffice. Governance programs must therefore evolve before lawmakers finalize audit and transparency statutes. Vendor liability trends further magnify that urgency, as the next section explains.

Vendor Liability Expands Quickly

Courts are reconsidering the shield that technology suppliers traditionally enjoyed. Recent rulings, notably in Mobley, have let claims proceed on the theory that a hiring platform acts as the employer's agent, exposing the vendor to direct discrimination claims. Moreover, discovery orders now compel vendors to disclose client lists and algorithmic documentation.

Lawyers note that agency theory strengthens plaintiffs' hand because both employers and vendors can be held liable for damages. Nevertheless, proactive audits and bias mitigation reports can help limit punitive findings. Professionals can deepen their understanding of audit design with the AI Data Robotics™ certification.

Liability expansion transforms Automated HR Bias from an abstract threat into a board-level financial risk. Consequently, procurement contracts must allocate validation duties and indemnities explicitly. Growing public distrust compounds those contractual stakes, as the following section shows.

Public Trust Erodes Fast

Pew Research reports that two-thirds of adults would not apply for a job with an employer that uses AI in hiring decisions. Only 22% feel comfortable with machines deciding hiring outcomes. Corporate reputations therefore hinge on transparent explanations of algorithms and accessible application processes.

High-profile stories, including Amazon’s scrapped résumé sorter, illustrate how bias narratives move rapidly online. Moreover, diversity-minded investors increasingly question technology governance during shareholder meetings. Recruitment marketers now track social sentiment to flag viral complaints early.

Trust deficits amplify Automated HR Bias headlines and invite tougher regulators. Therefore, communication teams must partner with HR and legal to craft plain-language disclosures. Those disclosures support the concrete mitigation practices discussed next.

Mitigation Strategies For HR

First, map every automated touchpoint across the employment lifecycle, from sourcing to onboarding. Next, run disparate-impact analyses comparing selection rates across protected groups at each stage. Use independent statisticians to validate results and recommend threshold adjustments.
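The per-stage disparate-impact step can be sketched with the four-fifths rule of thumb from the federal Uniform Guidelines on Employee Selection Procedures. Under that rule, a group whose selection rate falls below 80% of the highest group's rate warrants scrutiny. The sketch pairs the rule with a pooled two-proportion z-test as a rough significance check. It is a minimal illustration that assumes you already have applicant and selection counts per group for a single stage. The group labels, counts, and function names are hypothetical, and the check does not replace the independent statistical review described above.

```python
from math import sqrt

# Minimal sketch of a per-stage adverse-impact check.
# Group names and counts are hypothetical, not real audit data.


def selection_rates(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Selection rate per group: number selected divided by number who applied."""
    return {g: selected.get(g, 0) / n for g, n in applicants.items() if n > 0}


def impact_ratios(applicants: dict[str, int], selected: dict[str, int]) -> dict[str, float]:
    """Each group's selection rate divided by the highest group's rate."""
    rates = selection_rates(applicants, selected)
    top = max(rates.values())
    if top == 0:
        raise ValueError("No one was selected at this stage; impact ratios are undefined.")
    return {g: r / top for g, r in rates.items()}


def four_fifths_flags(applicants: dict[str, int], selected: dict[str, int], threshold: float = 0.8) -> list[str]:
    """Groups whose impact ratio falls below the four-fifths (80%) rule of thumb."""
    ratios = impact_ratios(applicants, selected)
    return sorted(g for g, ratio in ratios.items() if ratio < threshold)


def two_proportion_z(sel_a: int, n_a: int, sel_b: int, n_b: int) -> float:
    """Pooled two-proportion z statistic for the gap between two selection rates."""
    pooled = (sel_a + sel_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0  # everyone or no one selected in both groups: no gap to test
    return (sel_a / n_a - sel_b / n_b) / se


if __name__ == "__main__":
    # Hypothetical counts for one stage, e.g. an automated resume screen.
    applicants = {"group_a": 200, "group_b": 150}
    selected = {"group_a": 60, "group_b": 27}
    print(impact_ratios(applicants, selected))
    print(four_fifths_flags(applicants, selected))
    print(round(two_proportion_z(60, 200, 27, 150), 2))
```

In this hypothetical, group_b's 18% selection rate is 60% of group_a's 30%, so the rule flags it. The z statistic of about 2.57 also exceeds the conventional 1.96 cutoff. The four-fifths rule is a screening heuristic, not a legal safe harbor, which is why the sketch reports a significance measure alongside it. Running the same functions on each stage's counts mirrors the stage-by-stage analysis described above.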

- Retain human reviewers for borderline cases and ADA accommodations.

- Document feature engineering to avoid proxy attributes like zip code.

- Schedule quarterly audits and include vendor updates.
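The proxy-attribute item can also be checked statistically before a feature goes live. If a seemingly neutral input such as zip code is strongly associated with a protected attribute, it may act as a stand-in for that attribute. The sketch below measures that association with Cramér's V, where 0 means no association and 1 means the feature perfectly separates the groups. It assumes categorical features and self-reported group labels collected for auditing only. The 0.3 review threshold, the feature names, and the helper functions are all hypothetical. A high score is a prompt for documentation and review, not proof of discrimination.

```python
from collections import Counter
from math import sqrt


def cramers_v(feature_values: list[str], group_labels: list[str]) -> float:
    """Cramér's V between one categorical feature and a protected attribute."""
    n = len(feature_values)
    if n == 0 or n != len(group_labels):
        raise ValueError("Need equal-length, non-empty inputs.")
    row_totals = Counter(feature_values)
    col_totals = Counter(group_labels)
    joint = Counter(zip(feature_values, group_labels))
    # Pearson chi-square statistic over the full contingency table.
    chi2 = 0.0
    for r, r_tot in row_totals.items():
        for c, c_tot in col_totals.items():
            expected = r_tot * c_tot / n
            chi2 += (joint[(r, c)] - expected) ** 2 / expected
    k = min(len(row_totals), len(col_totals)) - 1
    if k == 0:
        return 0.0  # a single category or a single group: no measurable association
    return sqrt(chi2 / (n * k))


def flag_proxies(features: dict[str, list[str]], group_labels: list[str], threshold: float = 0.3) -> list[str]:
    """Features whose association with the protected attribute meets a hypothetical review threshold."""
    return sorted(name for name, values in features.items() if cramers_v(values, group_labels) >= threshold)


if __name__ == "__main__":
    # Hypothetical audit sample: zip code tracks group membership, shift preference does not.
    groups = ["x", "x", "x", "y", "y", "y"]
    features = {
        "zip_code": ["10001", "10001", "10001", "60601", "60601", "60601"],
        "shift_pref": ["day", "night", "day", "day", "night", "day"],
    }
    print(flag_proxies(features, groups))
```

With small samples or many categories, Cramér's V tends to overstate association. An independent statistician would normally apply a bias correction or a significance test before drawing conclusions.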

Additionally, embed accessible design by offering text alternatives to video prompts and extended time allowances. Recruitment success metrics should include equality outcomes, not just time-to-hire or cost savings. Finally, train hiring managers on ethical AI basics and incident escalation.

Consistent application of these practices reduces Automated HR Bias exposure and strengthens candidate experience. Moreover, proactive programs create evidence trails valued by regulators and courts. With frameworks in place, leaders can look toward strategic roadmaps described in the final section.

Actionable Steps Moving Forward

Begin with a cross-functional task force that pairs legal, data science, and employee representatives. Set quarterly goals for audit completion, vendor renegotiation, and public disclosure rollout. Meanwhile, monitor pending legislation and agency guidance to adjust controls proactively.

Allocate budget for third-party fairness testing and platform explainability features. Consequently, investors and candidates will see concrete commitments rather than aspirational slogans. After each milestone, share metrics externally to rebuild trust eroded by Automated HR Bias stories.

Strategic governance converts compliance chores into competitive advantages. Therefore, early movers will shape industry standards and sway regulators. The conclusion recaps essential insights and offers a clear call to action.

Conclusion

Algorithmic hiring is no longer a futuristic experiment but a daily business reality. However, recent litigation, tightening regulations, and skeptical public sentiment expose serious financial and ethical stakes. We reviewed how Automated HR Bias allegations now target both employers and vendors under evolving agency-liability theories. Regulators demand documented testing, accessible design, and ongoing human oversight. Meanwhile, courts increasingly compel disclosure of client lists and statistical evidence, raising discovery costs. Proactive mitigation through audits, transparent communication, and continuous learning offers the best defense and a meaningful reputational advantage. Act today by mapping tools, training teams, and pursuing recognized credentials that elevate governance excellence.