AI CERTS


Reasoning Control in Gemini 3.1

Gemini 3.1 Feature Overview

Gemini 3.1 Pro targets complex problem-solving. Google reports a 77.1% score on ARC-AGI-2, more than double its predecessor's result, and independent reviewers echoed the jump, citing dramatic gains on science benchmarks. Stronger reasoning alone is not enough, however, so the company added a simple switch for Reasoning Control. With that switch, developers choose how much internal reasoning the model performs before responding.

In practice the control influences latency, cost, and result depth. Notably, the feature arrives across AI Studio, Vertex AI, the Gemini app, and NotebookLM. These surfaces share identical preview pricing, easing cross-product planning.

The overview underscores major themes: measurable capability growth, explicit control surfaces, and wide distribution. Consequently, engineers must reassess previous throughput assumptions before scaling workloads.

Understanding Gemini Thinking Levels

The thinking_level parameter exposes three options on Gemini 3.1 Pro: low, medium, and high. Gemini 3 Flash additionally keeps a minimal level for chat-heavy jobs. Selecting low trims internal reasoning steps and thereby reduces cost. Medium balances accuracy against speed. High remains the default and allows the fullest internal reasoning. Google warns that setting both thinking_level and thinking_budget in one request triggers an error, so pick one interface per request.
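The one-interface-per-request rule can be enforced on the client before any call is made. The following is a minimal sketch, not the SDK's own API: `build_thinking_config` is a hypothetical helper, and the dictionary keys simply mirror the parameter names used in this article.

```python
from typing import Optional

# Levels the article lists for Gemini 3.1 Pro.
ALLOWED_LEVELS = {"low", "medium", "high"}


def build_thinking_config(level: Optional[str] = None,
                          budget: Optional[int] = None) -> dict:
    """Return a thinking-config dict, rejecting mixed level/budget settings.

    Hypothetical helper: the key names follow the article's wording and
    are not guaranteed to match any particular SDK's field names.
    """
    if level is not None and budget is not None:
        raise ValueError("set thinking_level or thinking_budget, not both")
    if level is not None:
        if level not in ALLOWED_LEVELS:
            raise ValueError(f"unknown thinking level: {level!r}")
        return {"thinking_level": level}
    if budget is not None:
        return {"thinking_budget": budget}
    # Empty config: the service applies its default (high, per the article).
    return {}
```

Failing fast on the client keeps the mistake out of production logs and makes the rule easy to cover with a unit test.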

Thought signatures complicate multi-turn flows. However, official SDKs capture and forward these encrypted blobs automatically. Custom clients must store and return them, or the model forgets earlier deliberations. Proper handling preserves consistent Reasoning Control across turns.
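For custom clients, the store-and-return requirement might look like the sketch below. The turn structure and the `thought_signature` key are assumptions made for illustration. The real wire format is defined by the API documentation, and official SDKs handle this step automatically.

```python
from typing import Optional


def append_model_turn(history: list, text: str,
                      signature: Optional[str]) -> None:
    """Record a model reply together with its opaque thought signature."""
    turn = {"role": "model", "text": text}
    if signature is not None:
        # Stored verbatim: the blob is encrypted and must not be altered.
        turn["thought_signature"] = signature
    history.append(turn)


def build_next_request(history: list, user_text: str) -> list:
    """Return the turns to send next, with earlier signatures still attached."""
    # Copy each turn so callers cannot mutate the stored history by accident.
    return [dict(turn) for turn in history] + [
        {"role": "user", "text": user_text}
    ]
```

The key design point is that the signature travels with the turn that produced it, so it cannot be dropped when history is trimmed or serialized.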

Knowing each level's impact lets teams map tasks to spend. Those decisions rarely stay static, so dynamic routing systems often adjust levels at runtime based on prompt complexity.
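A runtime router of that kind can start out very simple. The sketch below uses a hypothetical heuristic (word count plus a few keyword markers) to pick a level. The thresholds and markers are illustrative assumptions, not values from Google's documentation, and should be tuned against real traffic.

```python
# Hypothetical markers suggesting a prompt needs deeper reasoning.
HARD_MARKERS = ("prove", "derive", "debug", "step by step", "optimize")


def choose_thinking_level(prompt: str) -> str:
    """Pick a thinking level from crude complexity signals."""
    words = len(prompt.split())
    lowered = prompt.lower()
    # Substring matching is deliberately crude ("improve" contains "prove").
    hits = sum(marker in lowered for marker in HARD_MARKERS)
    if hits >= 2 or words > 400:
        return "high"
    if hits == 1 or words > 80:
        return "medium"
    return "low"
```

A production router would more likely rely on a small classifier or on historical quality metrics per task type, but the interface (prompt in, level out) can stay the same.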

Tuning Reasoning And Cost

Vertex AI’s preview pricing splits costs by context size. Input tokens cost $2 per million for standard contexts and $4 per million for long prompts, while output tokens cost $12 and $18 per million for the same tiers. High thinking naturally emits more tokens, inflating invoices, so budget management becomes strategic rather than incidental. Teams should benchmark time-to-first-token under every level setting. A simple dashboard can reveal that low shortens response latency by 35-40% in many scenarios.
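The tiered rates translate into a short per-request calculation. This sketch uses the prices quoted above. The long-context boundary of 200,000 input tokens is an assumption, since the article does not state where the tier changes, and `output_tokens` is assumed to include any billed thinking tokens.

```python
# Assumption: the article does not give the tier boundary; adjust as needed.
LONG_CONTEXT_THRESHOLD = 200_000

# USD per million tokens, as quoted in the article for Vertex AI preview.
RATES_PER_MILLION = {
    "standard": {"input": 2.00, "output": 12.00},
    "long": {"input": 4.00, "output": 18.00},
}


def request_cost(input_tokens: int, output_tokens: int,
                 threshold: int = LONG_CONTEXT_THRESHOLD) -> float:
    """Estimate the USD cost of one request under the tiered rates."""
    tier = "long" if input_tokens > threshold else "standard"
    rates = RATES_PER_MILLION[tier]
    total = input_tokens * rates["input"] + output_tokens * rates["output"]
    return total / 1_000_000
```

For example, a request with 10,000 input tokens and 2,000 output tokens falls in the standard tier and costs about $0.044 under these rates.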

Consider this sample cost checklist:

- Estimate token volume per workflow.

- Apply per-level multipliers from the documentation's examples.

- Project monthly budget ceilings before rollout.
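The checklist can be turned into a small projection script. In this sketch the per-level output multipliers are placeholders, not figures from Google's documentation, and should be replaced with measured values. The default rates are the standard-tier prices quoted earlier, and the 20% safety buffer is likewise an assumption.

```python
# Hypothetical output-token multipliers per level; replace with measurements.
LEVEL_OUTPUT_MULTIPLIER = {"low": 1.0, "medium": 1.5, "high": 2.5}


def project_monthly_cost(requests_per_month: int, input_tokens: int,
                         base_output_tokens: int, level: str,
                         input_rate: float = 2.0,
                         output_rate: float = 12.0) -> float:
    """Project monthly USD spend for one workflow at a given level.

    Rates are USD per million tokens (standard-context tier by default).
    """
    output_tokens = base_output_tokens * LEVEL_OUTPUT_MULTIPLIER[level]
    per_request = (input_tokens * input_rate
                   + output_tokens * output_rate) / 1_000_000
    return requests_per_month * per_request


def within_ceiling(projected: float, ceiling: float,
                   buffer: float = 0.2) -> bool:
    """True if the projection plus a safety buffer stays under the ceiling."""
    return projected * (1 + buffer) <= ceiling
```

Running the projection once per workflow and per level gives finance a table of scenarios rather than a single number.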

Furthermore, grounding and search add separate line items. These often surprise newcomers. Consequently, finance leaders request upfront projections that marry usage patterns with each Level. Maintaining Reasoning Control thus demands joint discipline between engineering and finance.

Cost insights reinforce earlier capability analysis. However, teams must also validate Google’s benchmark claims on their own data. The next section surveys those numbers in detail.

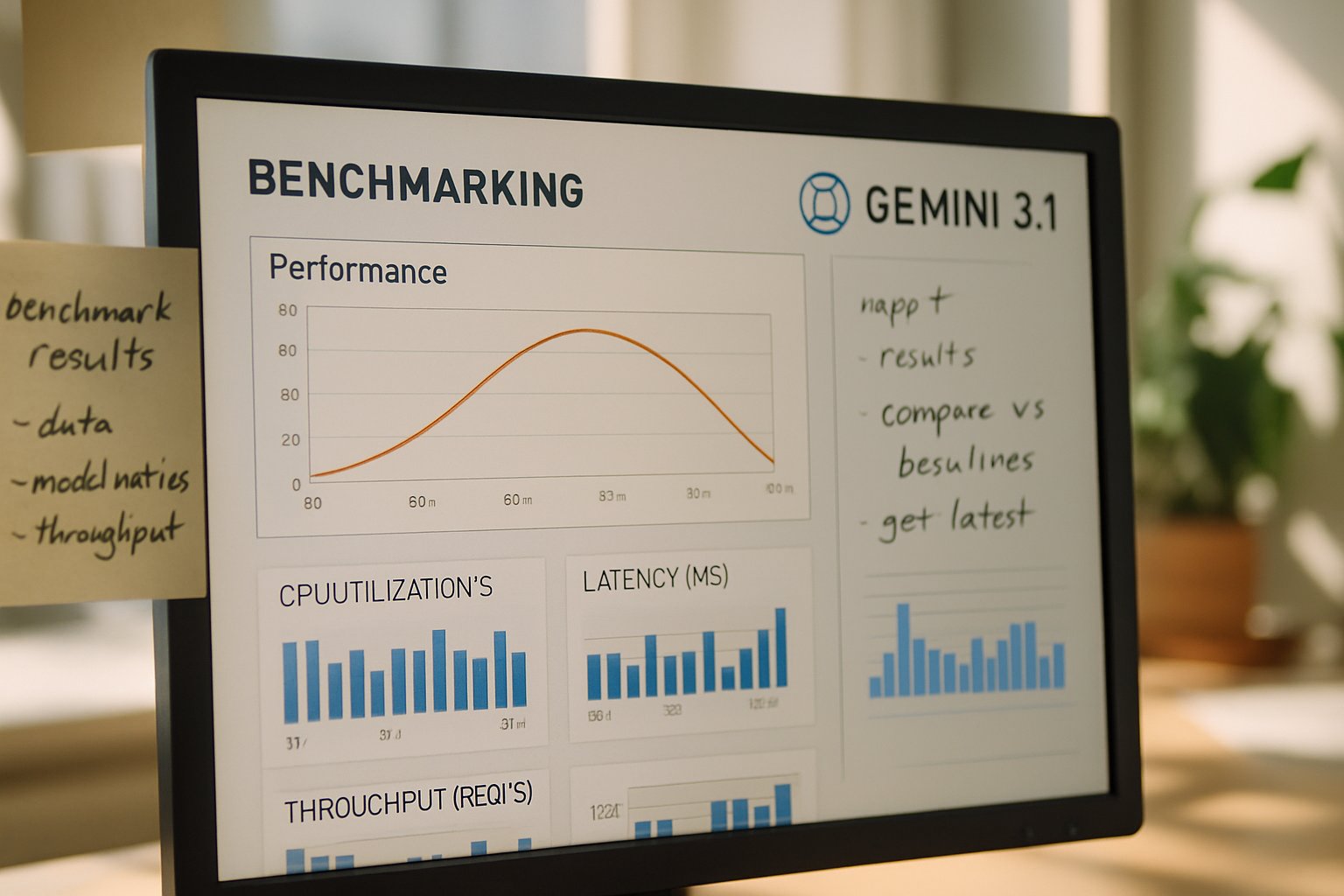

Benchmark Results In Context

ARC-AGI-2 scores grabbed headlines, yet other tests show nuanced gains. GPQA Diamond now posts 94.3%, edging past several university baselines. Humanity’s Last Exam improved modestly to 44.4%. Moreover, early agentic demos highlight fewer hallucinations during multi-step synthesis. Independent site implicator.ai notes that performance leaps arrive only three months after Deep Think previews, an unusually fast production cycle.

Benchmark enthusiasm warrants caution. Nevertheless, the results indicate a robust upgrade trajectory, and organizations building scientific or engineering agents can expect better zero-shot reasoning. Edge cases still exist, so analysts advise running domain-specific suites before locking deployment tiers. Maintaining empirical Reasoning Control avoids over-promising to downstream stakeholders.

The performance snapshot sets expectations. Implementation details then decide whether those expectations translate to real applications.

Implementation Tips And Pitfalls

APIs across Python, Node, and Go expose a thinkingConfig block in which one declares the desired level. Avoid sending a numeric thinking_budget alongside that flag, because doing so raises an API error immediately. Thought signatures appear in response objects when high reasoning triggers internal planning. Engineers should persist them within chat history metadata. Failing to do so causes the next turn to replan from scratch, wasting budget and time.

Consider these operational safeguards:

- Write integration tests that assert no request mixes thinking_level and thinking_budget.

- Log first-token latency for every level and compare weekly.

- Store signatures securely; treat them as transient secrets.
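The latency safeguard above can be sketched with the standard library alone. The sample format, pairs of level and seconds to first token, is an assumption about how logs are collected, and both function names are hypothetical.

```python
from collections import defaultdict
from statistics import median


def median_ttft_by_level(samples):
    """Group (level, seconds_to_first_token) pairs and take each median."""
    grouped = defaultdict(list)
    for level, seconds in samples:
        grouped[level].append(seconds)
    return {level: median(values) for level, values in grouped.items()}


def percent_reduction(baseline: float, candidate: float) -> float:
    """Percentage by which candidate latency undercuts the baseline."""
    return 100.0 * (baseline - candidate) / baseline
```

Comparing the median for low against the median for high each week shows whether a claimed latency saving actually holds for a given workload.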

Professionals can deepen security expertise with the AI Ethical Hacker™ certification. Furthermore, that course covers safe handling of sensitive request artifacts.

Following these tips maintains reliable Reasoning Control. However, scaling across departments introduces governance challenges, explored next.

Enterprise Adoption Strategy

Enterprises rarely toggle features in isolation. Instead, architecture committees map capability, compliance, and cost. Regulators also increasingly scrutinize opaque model reasoning, and selectable thinking levels offer a clear governance lever. In contrast, earlier numeric budgets confused auditors. Now, policy can state “use the low level for customer-facing chat” or “enable high only for approved research groups.”
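Policies like those can be encoded as a small lookup that caps the requested level per workload. The workload names and the default cap below are hypothetical, chosen only to mirror the two example policies.

```python
# Levels ordered from least to most reasoning.
LEVEL_ORDER = ["low", "medium", "high"]

# Hypothetical policy table: workload name -> highest permitted level.
MAX_LEVEL_BY_WORKLOAD = {
    "customer_chat": "low",
    "approved_research": "high",
}


def enforce_policy(workload: str, requested: str,
                   default_cap: str = "low") -> str:
    """Clamp the requested level to the workload's permitted maximum."""
    cap = MAX_LEVEL_BY_WORKLOAD.get(workload, default_cap)
    if LEVEL_ORDER.index(requested) > LEVEL_ORDER.index(cap):
        return cap
    return requested
```

Unknown workloads fall back to the most conservative cap, so a new team cannot reach high reasoning until it is explicitly added to the table.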

Procurement teams appreciate predictable rate cards, yet preview pricing may shift, so contracts should include budget buffers. Meanwhile, SRE groups must monitor latency SLAs when bursts force automatic level escalation. Observability hooks in the API facilitate that tracking.

These governance steps close the deployment loop. Consequently, enterprises can uphold security, finance, and performance targets while exercising precise Reasoning Control.

Key Takeaways And Next Steps

Gemini 3.1 Pro delivers tangible reasoning advances while handing developers a powerful new switch. Here are the closing highlights:

- Reasoning Control appears through thinking_level with three options.

- Deep Think research now lives inside the stable release.

- Vertex preview pricing rewards disciplined level selection and budget planning.

- Benchmarks impress, yet local testing remains essential.

- Thought signatures demand careful API hygiene.

Teams that formalize cost dashboards, security reviews, and level policies will unlock Gemini’s best value. Meanwhile, individual practitioners should pursue the linked certification to tighten ethical rigor.

Careful adoption today positions organizations for rapid model iterations tomorrow. Therefore, begin experiments, measure relentlessly, and refine your Reasoning Control strategy before full production rollout.