AI CERTS

2 months ago

Model Prompt Engineering Review of Claude Sonnet 5 Fennec

This article dissects the evidence behind the reported Claude Sonnet 5 “Fennec” leak, weighs the rumors, and maps next steps for technical teams.

Leak Origins Explained Clearly

The leak began when developer Pankaj Kumar posted a blurred screenshot showing the Vertex AI model string “claude-sonnet-5@20260203” alongside the codename “Fennec.”

The same screenshot showed a 404 error, which suggests the model was not publicly enabled.
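The leaked string follows the `name@YYYYMMDD` pattern that Vertex AI uses to label Claude model versions. As a minimal sketch, assuming that pattern, the identifier can be split into a model name and a snapshot date; `parse_vertex_model_id` and `ModelVersion` are hypothetical helpers for illustration, not part of any Google or Anthropic SDK.

```python
from datetime import date, datetime
from typing import NamedTuple


class ModelVersion(NamedTuple):
    """Parsed pieces of a Vertex-style Claude model identifier (hypothetical helper)."""
    name: str
    snapshot: date


def parse_vertex_model_id(model_id: str) -> ModelVersion:
    """Split 'name@YYYYMMDD' into the model name and its snapshot date.

    Raises ValueError if the '@' separator or the name is missing, or if the
    suffix is not a valid calendar date.
    """
    name, sep, stamp = model_id.partition("@")
    if not sep or not name:
        raise ValueError(f"expected 'name@YYYYMMDD', got {model_id!r}")
    # strptime rejects impossible dates such as 20260230.
    snapshot = datetime.strptime(stamp, "%Y%m%d").date()
    return ModelVersion(name=name, snapshot=snapshot)


leaked = parse_vertex_model_id("claude-sonnet-5@20260203")
print(leaked.name, leaked.snapshot.isoformat())  # claude-sonnet-5 2026-02-03
```

A well-formed string shows only that the name follows the pattern. It says nothing about whether the model is actually served, which is why the 404 in the screenshot matters more than the string itself.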

Independent bloggers compared the leak to past Vertex staging artifacts that never reached release status.

The evidence stems from one unverified screenshot.

Consequently, claims of a finished release remain premature.

Next, the speculation that followed deserves closer analysis.

Evidence Versus Speculation Analysis

Rumors multiplied as aggregator sites copied each other within hours of the initial post.

Moreover, many outlets presented benchmark numbers without citing any reproducible testing.

Experts in Model Prompt Engineering noted that no system card or commit hash accompanied those claims.

- SWE-bench performance allegedly above 80%.

- Context window expanded to one million tokens.

- Pricing projected at 50% below Sonnet 4.5.

Yet none of these claims cites original logs, whitepapers, or benchmark repositories.

Speculation outran verifiable facts within a single day.

Therefore, readers must separate signals from noise.
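One way to separate signals from noise is to keep a simple claim ledger that records each rumor next to its primary source, if one exists. The sketch below is a hypothetical illustration (`Claim` and `triage` are made-up names): a claim counts as signal only when it links to something checkable, such as logs, a system card, or a leaderboard entry. Having a source does not make a claim true; it only makes it verifiable.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Claim:
    """One circulating claim and the primary source backing it, if any."""
    text: str
    source_url: Optional[str] = None  # link to logs, a system card, or a leaderboard entry


def triage(claims: list[Claim]) -> tuple[list[Claim], list[Claim]]:
    """Split claims into (signal, noise): sourced versus unsourced."""
    signal = [c for c in claims if c.source_url]
    noise = [c for c in claims if not c.source_url]
    return signal, noise


# The three rumored specs, none of which arrived with a primary source.
rumors = [
    Claim("SWE-bench performance above 80%"),
    Claim("One-million-token context window"),
    Claim("Pricing 50% below Sonnet 4.5"),
]
signal, noise = triage(rumors)
print(f"{len(signal)} sourced, {len(noise)} unsourced")  # 0 sourced, 3 unsourced
```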

Next, infrastructure clues offer stronger context.

Infrastructure Context And Plausibility

In an October announcement, Anthropic said it would expand its use of Google Cloud TPUs to as many as one million chips.

Consequently, observers argue that a larger Sonnet model could be trained and served economically.

As a codename, Fennec fits Anthropic's naming patterns, which reference agile mammals and birds.

However, infrastructure capacity alone does not confirm the capability breakthroughs the leaks promise.

The TPU partnership makes scale plausible.

Nevertheless, hardware availability is not a launch confirmation.

Next, the benchmark assertions deserve close inspection.

Benchmark Claims Under Scrutiny

SWE-bench Verified scores models on real GitHub issues: a task counts as resolved only when the model's patch passes the repository's tests, which yields hard performance numbers.

Therefore, a score above 80% would put any model at or near the top of publicly reported results, a range Anthropic already claims for Opus 4.5.
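Because SWE-bench Verified is a fixed set of 500 human-validated tasks, a percentage claim translates directly into a count of resolved issues. The arithmetic below is a minimal sketch; `resolve_rate` and `tasks_to_exceed` are hypothetical helpers that only do the math and do not run the benchmark.

```python
VERIFIED_TASKS = 500  # SWE-bench Verified contains 500 human-validated tasks


def resolve_rate(resolved: int, total: int = VERIFIED_TASKS) -> float:
    """Percentage of tasks whose generated patch passes the repository's tests."""
    if not 0 <= resolved <= total:
        raise ValueError("resolved must be between 0 and total")
    return 100.0 * resolved / total


def tasks_to_exceed(threshold_pct: int, total: int = VERIFIED_TASKS) -> int:
    """Smallest resolved count whose rate is strictly above threshold_pct.

    Integer arithmetic avoids floating-point rounding at the boundary.
    """
    return total * threshold_pct // 100 + 1


print(tasks_to_exceed(80), resolve_rate(401))  # 401 80.2
print(100 / VERIFIED_TASKS)  # 0.2 percentage points per task
```

Each task is worth 0.2 percentage points, so "above 80%" means at least 401 resolved issues, and gaps of a point or less between leaderboard entries can come down to a handful of tasks.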

Experts in Model Prompt Engineering searched the official SWE-bench leaderboard but found no entry for Sonnet 5.

Meanwhile, Anthropic has not published a system card documenting test methodology or safety mitigations.

Instead, the circulating numbers trace back to blurred spreadsheet images with no identifiable source.

No benchmark record supports the lofty numbers.

Consequently, performance claims remain anecdotal until verified.

Next, we explore industry ramifications.

Potential Industry Impact Scenarios

If the rumors prove true, cheaper high-end performance could pressure pricing across Anthropic's rivals and cloud providers.
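To see what "cheaper" would mean for a real budget, a rough cost model helps. The sketch below assumes baseline rates of $3 per million input tokens and $15 per million output tokens (Sonnet 4.5's published standard rates at the time of writing; check current pricing before relying on them) and a hypothetical monthly workload. The rumor does not say whether the 50% cut applies to input, output, or both, so the sketch assumes both.

```python
from decimal import Decimal

# Assumed baseline list prices in USD per million tokens; verify against current pricing.
BASELINE_INPUT_PER_M = Decimal("3.00")
BASELINE_OUTPUT_PER_M = Decimal("15.00")
RUMORED_DISCOUNT = Decimal("0.50")  # the unverified "50% below Sonnet 4.5" claim


def monthly_cost(input_tokens: int, output_tokens: int,
                 discount: Decimal = Decimal("0")) -> Decimal:
    """USD cost for a month of traffic at the baseline rates, minus an optional discount."""
    per_m = Decimal(1_000_000)
    gross = (Decimal(input_tokens) / per_m) * BASELINE_INPUT_PER_M \
        + (Decimal(output_tokens) / per_m) * BASELINE_OUTPUT_PER_M
    # Discount applied uniformly to input and output (an assumption).
    return (gross * (1 - discount)).quantize(Decimal("0.01"))


# Hypothetical workload: 2 billion input and 400 million output tokens per month.
today = monthly_cost(2_000_000_000, 400_000_000)
if_rumor_holds = monthly_cost(2_000_000_000, 400_000_000, RUMORED_DISCOUNT)
print(today, if_rumor_holds)  # 12000.00 6000.00
```

`Decimal` keeps currency arithmetic exact, which matters once the same calculation is repeated across many workloads.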

Moreover, a million-token context might redefine collaborative coding agents and long-document workflows.
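Whether a workflow would actually benefit depends on how much material it needs in a single prompt. The sketch below estimates that with the common rule of thumb of roughly four characters per token for English text, an approximation only, since real counts depend on the tokenizer. It also reserves room for the response; the 8,000-token reserve and the corpus sizes are hypothetical.

```python
# Rough rule of thumb for English prose; real counts depend on the tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Approximate token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], window: int,
                    reserve_for_output: int = 8_000) -> bool:
    """True if the estimated prompt plus the reserved output budget fits the window."""
    prompt_tokens = sum(estimate_tokens(doc) for doc in documents)
    return prompt_tokens + reserve_for_output <= window


# Hypothetical corpus: 300 files of about 12,000 characters each (~900k tokens).
corpus = ["x" * 12_000] * 300
print(fits_in_context(corpus, window=200_000),
      fits_in_context(corpus, window=1_000_000))  # False True
```

A corpus that overflows a 200K window but fits in a million-token one is exactly the case where a larger context could replace chunking or retrieval.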

Teams skilled in Model Prompt Engineering would rapidly integrate such capacity into existing pipelines.

- Accelerated feature shipping for consumer apps.

- Shift of compute spend toward TPUs.

- Raised compliance scrutiny for code generation.

Nevertheless, these outcomes depend on a confirmed release and stable supply economics.

Industry winners depend on actual capabilities.

Therefore, decision makers need verified data.

Stakeholders should therefore pursue concrete checks.

Verification Steps For Stakeholders

Firstly, contact Anthropic’s press desk and request an embargoed brief.

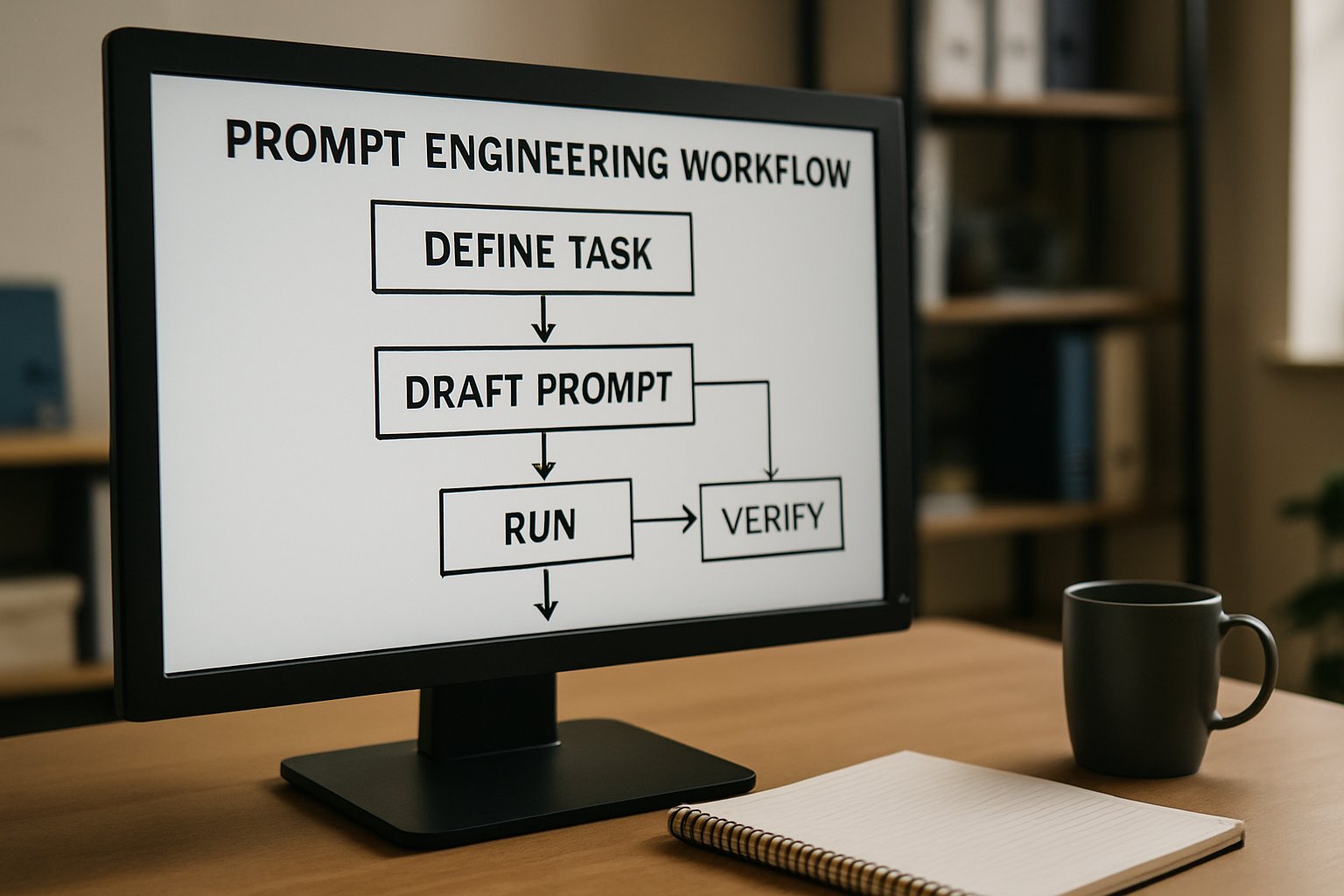

Secondly, document any Model Prompt Engineering experiments with timestamped logs and unit tests.
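A timestamped experiment log can be as simple as one JSON line per run. The sketch below is one possible shape, not a standard format: `log_experiment` is a hypothetical helper, and the model ID used in the test is a placeholder. Hashing the prompt lets a later run confirm it used exactly the same input, and the accompanying unit test pins that behavior down.

```python
import hashlib
import json
from datetime import datetime, timezone


def log_experiment(model_id: str, prompt: str, output: str) -> str:
    """Return one JSON line recording a prompt experiment with a UTC timestamp.

    The prompt is stored alongside its SHA-256 digest so later runs can
    confirm they used exactly the same input.
    """
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
        "prompt": prompt,
        "output": output,
    }
    return json.dumps(record, sort_keys=True)


def test_log_experiment_is_reproducible():
    """Unit test: the digest is stable and the timestamp carries a UTC offset."""
    line = json.loads(log_experiment("model-under-test", "Say hi", "hi"))
    assert line["prompt_sha256"] == hashlib.sha256(b"Say hi").hexdigest()
    assert line["timestamp"].endswith("+00:00")


test_log_experiment_is_reproducible()
```

Appending these lines to a file gives an audit trail that survives long after a screenshot has been deleted.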

Additionally, query the SWE-bench maintainers about pending submissions referencing Fennec.

Also archive the original Vertex call, including headers and request bodies, so others can reproduce it.
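An archived request is only safe to share if credentials are stripped first. The sketch below builds a reproducible record of one call with sensitive headers redacted and a digest of the canonicalized body. The endpoint and payload are placeholders, not a real Vertex AI request format, and `archive_request` is a hypothetical helper.

```python
import hashlib
import json
from datetime import datetime, timezone

# Header names whose values must never be written to an archive.
SENSITIVE_HEADERS = {"authorization", "x-goog-api-key", "cookie"}


def archive_request(method: str, url: str, headers: dict[str, str], body: dict) -> dict:
    """Build a reproducible, credential-free record of one API call."""
    safe_headers = {
        name: ("[REDACTED]" if name.lower() in SENSITIVE_HEADERS else value)
        for name, value in headers.items()
    }
    # Canonical JSON (sorted keys, no spaces) so the digest ignores key order.
    body_text = json.dumps(body, sort_keys=True, separators=(",", ":"))
    return {
        "archived_at": datetime.now(timezone.utc).isoformat(),
        "method": method,
        "url": url,
        "headers": safe_headers,
        "body": body,
        "body_sha256": hashlib.sha256(body_text.encode("utf-8")).hexdigest(),
    }


# Placeholder endpoint and payload for illustration only.
record = archive_request(
    "POST",
    "https://example.invalid/v1/models/claude-sonnet-5@20260203:predict",
    {"Authorization": "Bearer secret-token", "Content-Type": "application/json"},
    {"messages": [{"role": "user", "content": "ping"}], "max_tokens": 16},
)
print(json.dumps(record, indent=2))
```

Keeping the full body next to its digest lets a reviewer both replay the call and confirm that the archived copy was not edited afterward.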

Professionals can enhance their expertise with the AI Prompt Engineer™ certification to standardize investigation methods.

Clear procedures reduce rumor amplification.

Consequently, evidence quality improves across teams.

Finally, we distill strategic lessons.

Strategic Takeaways And Outlook

Model Prompt Engineering success depends on disciplined verification, not viral headlines.

In contrast, infrastructure leaks offer only partial visibility into shipping schedules and governance.

Therefore, organizations should wait for Anthropic to publish an official release note before budgeting workloads.

Meanwhile, continued practice in Model Prompt Engineering will prepare teams for whichever model lands first.

Consistent Model Prompt Engineering drills also establish benchmark baselines, making delta analysis easier once verified performance data appears.
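Delta analysis against a baseline is most informative when it compares results task by task instead of subtracting two headline percentages. The sketch below assumes each run is stored as a mapping from task ID to pass/fail; `delta_report` is a hypothetical helper, and the task IDs and results are made up for illustration.

```python
def delta_report(baseline: dict[str, bool], candidate: dict[str, bool]) -> dict:
    """Compare pass/fail results for two models on the task IDs both runs share."""
    shared = baseline.keys() & candidate.keys()
    if not shared:
        raise ValueError("no overlapping task IDs to compare")
    newly_passing = sorted(t for t in shared if candidate[t] and not baseline[t])
    regressions = sorted(t for t in shared if baseline[t] and not candidate[t])
    base_rate = sum(baseline[t] for t in shared) / len(shared)
    cand_rate = sum(candidate[t] for t in shared) / len(shared)
    return {
        "tasks": len(shared),
        "delta_pct_points": round(100 * (cand_rate - base_rate), 1),
        "newly_passing": newly_passing,
        "regressions": regressions,
    }


# Made-up results for four hypothetical tasks.
baseline = {"t1": True, "t2": False, "t3": True, "t4": False}
candidate = {"t1": True, "t2": True, "t3": False, "t4": True}
print(delta_report(baseline, candidate))
# {'tasks': 4, 'delta_pct_points': 25.0, 'newly_passing': ['t2', 't4'], 'regressions': ['t3']}
```

The net gain of 25 points hides one regression; listing both directions keeps a headline improvement from masking tasks the new model breaks.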

Ultimately, enterprises that institutionalize Model Prompt Engineering standards will respond fastest to any validated Claude release.

The path forward favors disciplined experimentation.

Nevertheless, patience remains vital until confirmations arrive.

The closing section summarizes key actions.

Leaked Vertex strings sparked intense debate in early February.

However, one screenshot cannot certify a public model.

Anthropic's silence, the absence of benchmarks, and recycled rumors underline the uncertainty.

Nevertheless, TPU capacity and past cadence suggest progress continues behind the curtain.

Therefore, leaders should verify, document, and budget only after data appears.

Consequently, now is the moment to sharpen prompt craft, rehearse verification drills, and upskill teams.

Professionals eager to lead the next wave should pursue the AI Prompt Engineer™ credential, stay tuned to Anthropic channels, and subscribe for ongoing analysis.